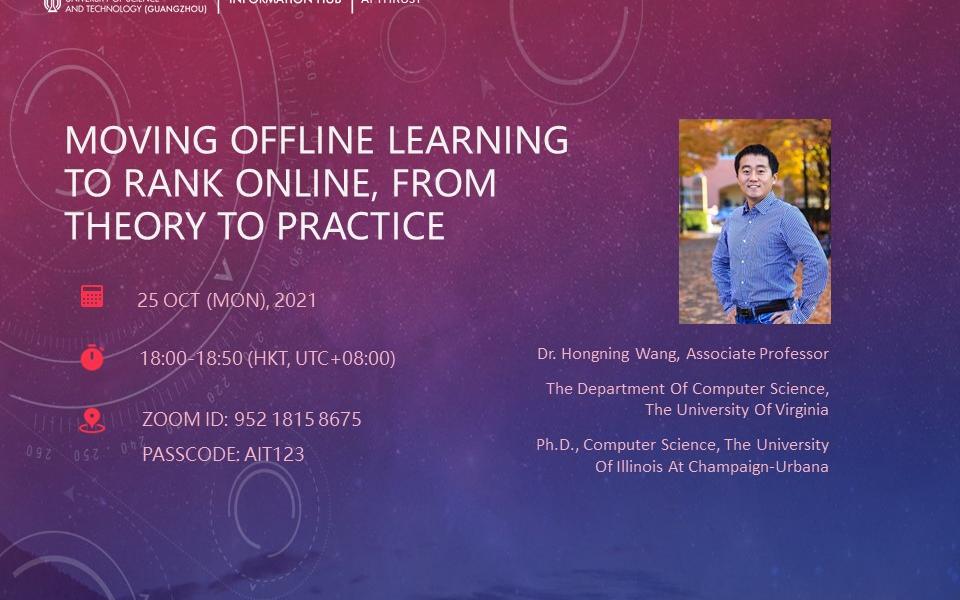

AI Thrust Seminar | Moving Offline Learning to Rank Online, from Theory to Practice

Online Learning to Rank (OL2R) eliminates the need for explicit relevance annotation by optimizing rankers directly from their interactions with users on the fly. However, the exploration it requires drives it away from successful practices in offline learning to rank, which limits OL2R's empirical performance and practical applicability.

Stepping away from the numerous but sub-optimal existing OL2R algorithms, we take a unique perspective and convert offline learning to rank algorithms to the online setting, which lets us directly leverage the best practices from the past twenty years of development in offline learning to rank to solve the OL2R problem. In this talk, I will discuss our recent effort in learning a neural ranking model online from user clicks. We prove that, under standard assumptions, our neural OL2R solution obtains a gap-dependent regret upper bound of O(\log^2(T)), where regret is defined as the total number of mis-ordered pairs over T rounds. Empirically, it also outperformed a rich set of state-of-the-art OL2R baselines on two large benchmark datasets.

Dr. Hongning Wang is an Associate Professor in the Department of Computer Science at the University of Virginia. He received his PhD in computer science from the University of Illinois at Urbana-Champaign in 2014. His research lies at the intersection of machine learning, data mining, and information retrieval, with a special focus on sequential decision optimization and computational user modeling. His work has generated over 80 research papers in top venues in data mining and information retrieval. He is a recipient of a 2016 National Science Foundation CAREER Award, a 2020 Google Faculty Research Award, and the SIGIR’2019 Best Paper Award.